Measuring Marketing’s Effectiveness in 2021

Uncertainties Around Marketing’s ROI

Measuring marketing’s effectiveness remains a top priority for CMOs in 2021. Unlike almost every other part of operating a business, marketing has a return that remains frustratingly squishy to measure. The simple reason is that marketing professionals work with prospects and customers who are not totally “known”. For all the attempts to make marketing deterministic (in other words, to link together media, prospects, and customers into a totally quantifiable ecosystem), this will probably (hopefully?) never be achieved. This uncertainty comes with the territory for CMOs.

Still, the lack of a clear answer to “what is marketing doing for us” has frustrated finance-oriented executives for almost a century. That is not to say nothing has changed. Digital marketing has made the field more measurable, particularly for firms with smaller budgets. Single-channel efforts (e.g., paid search) are very measurable, particularly over time or between customer or product segments. However, this “ultra-measurability” also has the potential to be abused by companies selling inventory, whether these be search engines, publishers, or marketing performance agencies. Beware the rep who asks what your allowable cost per lead is within the first ten minutes of a conversation. Multi-channel measurement, though, is much harder.

Difficulties with Multi-Channel Measurement

Identity-resolution services, along with social media networks that harvest vast amounts of data about customers, can get around some of the squishiness of measuring marketing’s effectiveness across channels. Consumers at least state that they are wary of all-knowing ad networks. Yet, at the same time, their actions say that they still love using Facebook, Instagram, Twitter, and TikTok. The brief Facebook boycott of July 2020 probably made some consumers feel good about a few brands, but those brands are still advertising on Facebook and Instagram.

Companies like Google and Apple seem to be positioning themselves on privacy. In particular, Google’s recent announcement about ditching third-party cookies in Chrome by 2022 will throw a big wrench into digitally based multi-touch attribution efforts (Apple did this with Safari last year). However, this arguably only helps Google, as its search empire remains unaffected. It still owns your data as a Google user. So, as Google chomps up more and more of the digital landscape, the move to shut down third-party cookies is self-serving at best and anti-competitive at worst.

Even in a fictional universe of eternal third-party cookies and a democratized digital data environment, multi-channel marketing measurement has problems. Non-digital channels—think direct mail, out-of-home, and local marketing—can be measured in silos, but cannot really be linked to a comprehensive digital identity system/graph. CMOs who chase perfection in digital attribution run the risk of spending millions on technology that will be obsolete before it is deployed.

Measuring & Optimizing Brand Investment

Beyond multi-channel measurement, an even tougher challenge is measuring and optimizing long-term brand investment. No amount of digital user tracking technology will ever be able to solve this problem. Companies that invest a lot in brand advertising do not do so because it makes the phone ring or drives consumers to a landing page. They invest in brand advertising with an expectation that consumers’ preferences are shifted over long periods of time by continued exposure to compelling brand messaging.

Brand messaging generally does not activate the functional or rational parts of the brain (System 2, in the framework of Daniel Kahneman’s Thinking, Fast and Slow). It is emotional and subconscious (System 1). It’s no coincidence that large brands spend hundreds of millions of dollars on relatively mindless ads with recurring characters (e.g., geckos, celebrities) and musical jingles. They’re not trying to make you think; they’re trying to make your reptile brain build subconscious pattern recognition firmware.

The difficulty of measuring this most certainly does not mean you should skip long-run, System 1 advertising. Skipping it is actually a very common trap for companies obsessed with measurement. Instead of taking a big swing at brand advertising that might not show results for years, more and more dollars chase performance marketing, where spending circles the drain of allowable cost-per targets. Eventually, a deterministic decline sets in as upper-funnel weakness eats away at acquisition. By the time that happens, it’s often too late. Fortunately, there are really good ways to measure marketing effectiveness for System 1, emotional, brand advertising.

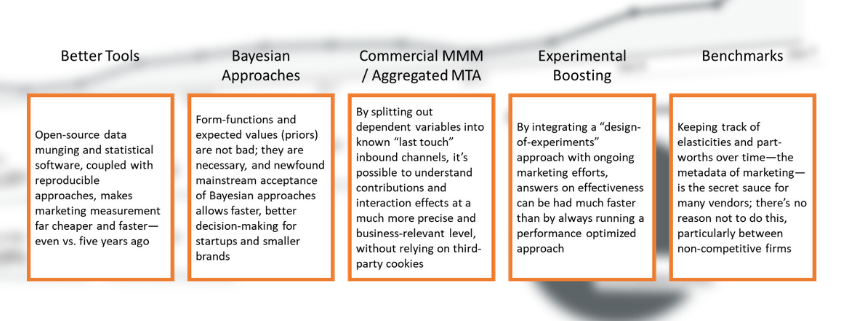

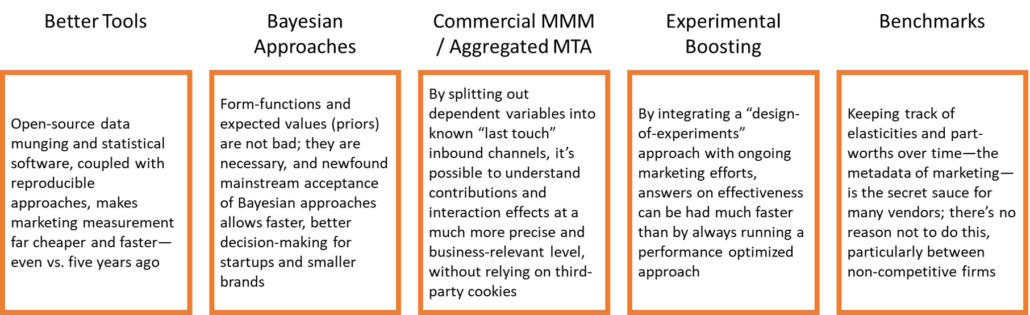

5 Key Developments in Measuring Marketing’s Effectiveness

Marketing is far more measurable and scientific today than it was even five years ago. This is due to both technological advancements (mainly digital) and improvements in measurement techniques.

Marketing mix modeling (MMM) has been in use since the 1970s. Even ten years ago, MMM was performed mainly by large consumer brands with $100M+ advertising budgets, spent mostly on television. The technique is fairly simple: stimulus data (impressions and spend), response data (sales), and control variables (economic data, competitive spend) are structured in a consistent time series data set, usually arranged by a panel (like geography). MMM is still not used by many smaller companies, or by companies that were “born on the web”, but it should be.
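To make the structure concrete, here is a minimal sketch of the kind of data set and regression described above. It is an illustration under stated assumptions, not a production MMM: the geographies, weeks, and numbers are synthetic, the `unemployment` control is a hypothetical stand-in for "economic data", and real models typically add carryover and saturation effects that are omitted here. It uses only the Python standard library and fits ordinary least squares through the normal equations.

```python
# Synthetic panel: (geography, week, media spend, unemployment index).
# Every value here is made up for illustration.
panel = [
    ("north", 1, 10.0, 5.0),
    ("north", 2, 20.0, 6.0),
    ("north", 3, 15.0, 4.0),
    ("south", 1, 5.0, 7.0),
    ("south", 2, 25.0, 5.0),
    ("south", 3, 10.0, 8.0),
]

# Synthetic response: sales = 50 + 3 * spend - 2 * unemployment (no noise),
# so the regression below should recover these coefficients.
sales = [50.0 + 3.0 * spend - 2.0 * unemp for _, _, spend, unemp in panel]


def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(rows, y):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


# Design matrix columns: intercept, stimulus (spend), control (unemployment).
design = [[1.0, spend, unemp] for _, _, spend, unemp in panel]
beta = ols(design, sales)
print([round(b, 3) for b in beta])
```

The sketch pools all geographies into a single regression. A real panel model would usually let the baseline differ by geography and would include noise, so the coefficients would be estimates with uncertainty rather than an exact recovery.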

In some ways, MMM hasn’t changed at all in forty years; the basic statistics remain the same. Even so, MMM has become a much more powerful tool for marketers at all kinds of companies due to five key developments.

- The data, software, and algorithms are orders of magnitude cheaper and better

Ten years ago, most marketing mix models were built using expensive, proprietary software. Today, the software is free. R (the better choice for MMM in my opinion) is totally free, as are packages for handling econometric time series data and generalized regression approaches. Data munging, the process of gathering and structuring data into a useful shape, is also far easier using packages like dplyr (R) and pandas (Python). Perhaps most importantly, the data munging process can now be made totally reproducible. A data pipeline built from text files and pointed at unstructured data in a data lake can turn gobbledygook into clean panel data in minutes, and changes can then be documented using a version control system like Git.

- Bayes’ theorem has made common knowledge respectable

Early marketing science textbooks described the “s-curve”: the idea that not all marketing investments behave like the curves in economics textbooks, with constant elasticity between stimulus and response. Instead, a lot of marketing requires a significant investment before its effectiveness increases, after which it resumes “normal” behavior. In the past, frequentist purists laughed these concepts out of the room unless they were sitting on years of data at very low and very high spend levels. This stance made it very hard to say much of anything about long-run or up-funnel marketing channels. Now that Bayesian approaches have become more commonplace and accepted, marketers have a much more flexible set of tools to model real-world marketing responses.
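One common way to express an s-curve is a Hill-type saturation function. The sketch below is an assumption chosen for illustration, not a form prescribed by this article; the parameter names `half_sat` and `shape` and the spend levels are hypothetical. It shows the behavior described above: each extra block of spend buys more response at first, then less.

```python
def hill(spend, half_sat, shape):
    """Hill-type response between 0 and 1.

    half_sat is the spend level that yields half of the maximum response;
    shape > 1 produces the s-shape (slow start, then diminishing returns).
    """
    return spend ** shape / (spend ** shape + half_sat ** shape)


# Equal spend steps so the increments are comparable (illustrative values).
levels = [0, 50, 100, 150, 200, 250]
response = [hill(x, half_sat=100, shape=2) for x in levels]

# Extra response gained from each additional 50 units of spend.
increments = [later - earlier for earlier, later in zip(response, response[1:])]
for level, gain in zip(levels[1:], increments):
    print(level, round(gain, 3))
```

In a Bayesian model, `half_sat` and `shape` would typically be given prior distributions that encode what is already believed about a channel, so the curve can be estimated even when historical spend never reached very low or very high levels.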

- “Commercial MMM” / Aggregated MTA is extremely powerful

Last-touch attribution is seen as primitive, but when combined with MMM techniques it’s an incredible tool. The idea is simple: instead of modeling one dependent variable (orders, sales, etc.), break the dependent variable out into mutually exclusive, collectively exhaustive dependent variables identified by their inbound channel, or last known marketing touch (for example, direct mail, direct response television, paid search, or organic search). This allows marketers to see how each channel drives the others, and gives a true assessment of contribution, without relying on third-party cookies.
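The split itself is a simple aggregation. As a rough sketch with a made-up order log (the week labels, channel names, and the `unattributed` bucket are all assumptions for illustration), the single order count becomes one weekly series per last-touch channel, and the series add back up to the total:

```python
from collections import defaultdict

# Hypothetical order log: (week, last known marketing touch).
orders = [
    ("2021-W01", "paid_search"),
    ("2021-W01", "direct_mail"),
    ("2021-W01", "paid_search"),
    ("2021-W02", "organic_search"),
    ("2021-W02", "paid_search"),
    ("2021-W02", None),  # no known touch
]


def split_by_last_touch(order_log):
    """Return {channel: {week: order count}} with every order counted once."""
    series = defaultdict(lambda: defaultdict(int))
    for week, channel in order_log:
        # Orders without a known touch get their own bucket so the
        # channel series stay collectively exhaustive.
        series[channel or "unattributed"][week] += 1
    return {channel: dict(weeks) for channel, weeks in series.items()}


by_channel = split_by_last_touch(orders)
total = sum(count for weeks in by_channel.values() for count in weeks.values())
print(by_channel["paid_search"], total)
```

Each per-channel series would then serve as the dependent variable in its own model, regressed on the spend of every channel, which is how cross-channel effects become visible.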

- Experimental boosting can supercharge inference

Combining econometric time series approaches with deliberate experimentation can drive far better measurement results. The idea is to create factorial differences in investment in different time periods, or if possible, across different panels. This creates the signal that models need to tease out real relationships and interaction effects. Some companies are unwilling to do this, afraid that they will negatively impact business results. However, in our experience, adding intentional variation to marketing timing, spending, and targeting as an ongoing design of experiments drives faster, deeper learning than always optimizing and trying to read faint signals.
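A full factorial design can be laid out in a few lines. The sketch below assumes two channels with two spend levels each and four geographic panels; all names are hypothetical, and a real plan would usually randomize which panel receives which cell rather than assigning them in order.

```python
from itertools import product

# Hypothetical channels and spend levels to vary deliberately.
levels = {"tv": ["low", "high"], "paid_search": ["low", "high"]}
geos = ["north", "south", "east", "west"]


def full_factorial(factor_levels):
    """List every combination of levels, one dict per experimental cell."""
    names = list(factor_levels)
    combos = product(*(factor_levels[name] for name in names))
    return [dict(zip(names, combo)) for combo in combos]


cells = full_factorial(levels)

# Assign one cell per geography (in order here; randomize in practice).
plan = dict(zip(geos, cells))
for geo, cell in plan.items():
    print(geo, cell)
```

Because every combination of levels appears, the design exposes interaction effects (for example, whether paid search performs differently when TV spend is high) that always-optimized spending tends to hide.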

- Benchmarks

Benchmarks about how different channels usually work—by industry, audience, and business stage—are pretty consistent. This is the dirty little secret big analytics consulting companies don’t want to tell you. They know what the models will do, plus or minus 20%. In fact, we are sometimes shocked at MarketBridge when people who have been down the MMM road a few times reject a result because “it doesn’t feel right”. That being said, robust databases of benchmarks on measuring marketing’s effectiveness are starting to be developed. We have one at MarketBridge. By using benchmarks to jump-start and sniff test models, much better results are possible.
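A benchmark sniff test can be as simple as comparing each modeled estimate to a reference value with a tolerance band. The sketch below borrows the plus or minus 20% figure from the paragraph above as a default; the channel names and return values are invented for illustration and are not actual benchmark data.

```python
def sniff_test(estimates, benchmarks, tolerance=0.20):
    """Label each channel estimate relative to its benchmark."""
    flags = {}
    for channel, estimate in estimates.items():
        benchmark = benchmarks.get(channel)
        if benchmark is None:
            flags[channel] = "no benchmark"
        elif abs(estimate - benchmark) <= tolerance * abs(benchmark):
            flags[channel] = "ok"
        else:
            flags[channel] = "review"
    return flags


# Made-up return-per-dollar figures, for illustration only.
benchmarks = {"paid_search": 2.0, "tv": 1.5}
estimates = {"paid_search": 2.3, "tv": 3.0, "podcast": 1.1}

flags = sniff_test(estimates, benchmarks)
print(flags)
```

A "review" flag is a prompt to look harder at the data and the model specification, not proof that the estimate is wrong.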

Practices in measuring marketing’s effectiveness are becoming more exciting in 2021. For the first time, practitioners are moving beyond the arcane knowledge and black boxes to a set of standardized tools and best practices that really work. However, as marketing becomes more quantitative (maybe even more so than finance), it’s also important to remember that marketing will never be deterministic. Measurement is great, but an obsession with certainty will ultimately lead to sub-optimal results.

Measure marketing’s impact across all channels

We’ve been helping consumer and business-to-business brands get to the core of what works and what doesn’t for over 20 years. Learn how we combine science and empirical discipline to help you determine where to place your dollars.